Automated Property Valuation

Supervised ML model to predict residential assessed property values using Zillow data

Built a supervised machine learning model in Python to predict residential property valuations, applying feature engineering, model selection, and performance evaluation on real-world Zillow data.

Overview

This project addressed the challenge of accurately pricing residential properties at scale for a real estate data platform. We engineered features from raw property data, evaluated three regression models, and selected a Gradient Boosting model that achieved a cross-validation MAE of $189,297 and a test MAE of $195,927. On cross-validation MAE it outperformed both the Lasso Regression ($233,626) and Decision Tree ($209,967) baselines.

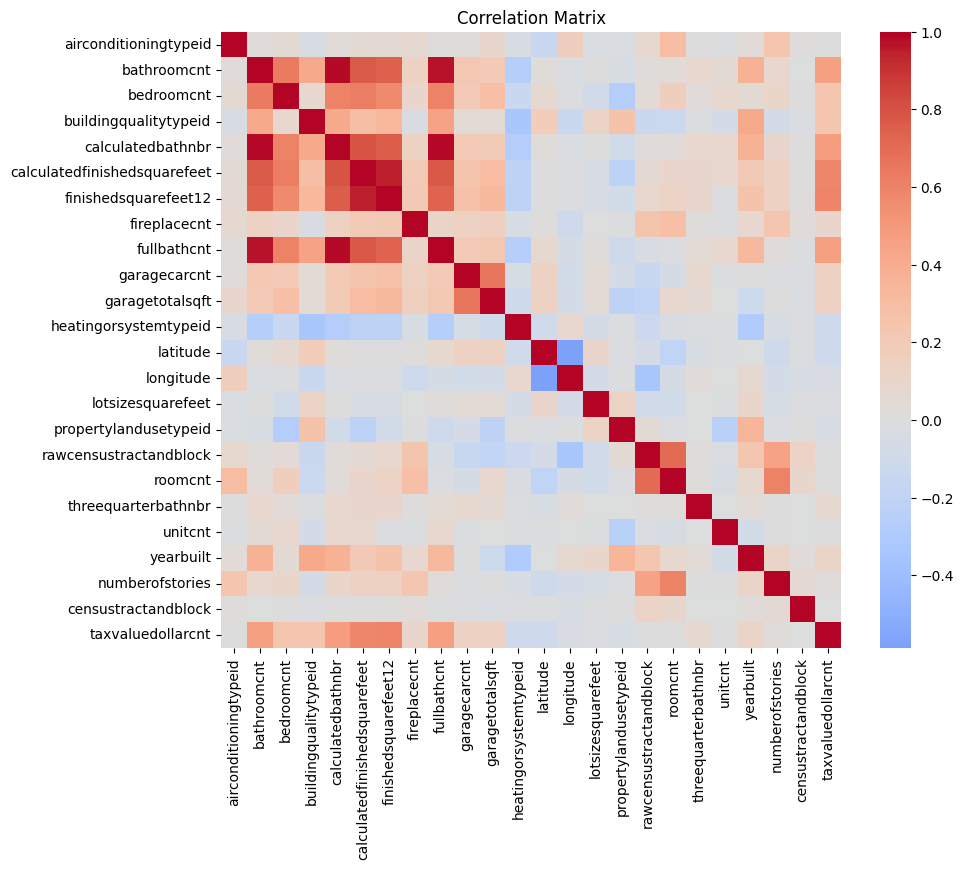

Data & Preprocessing

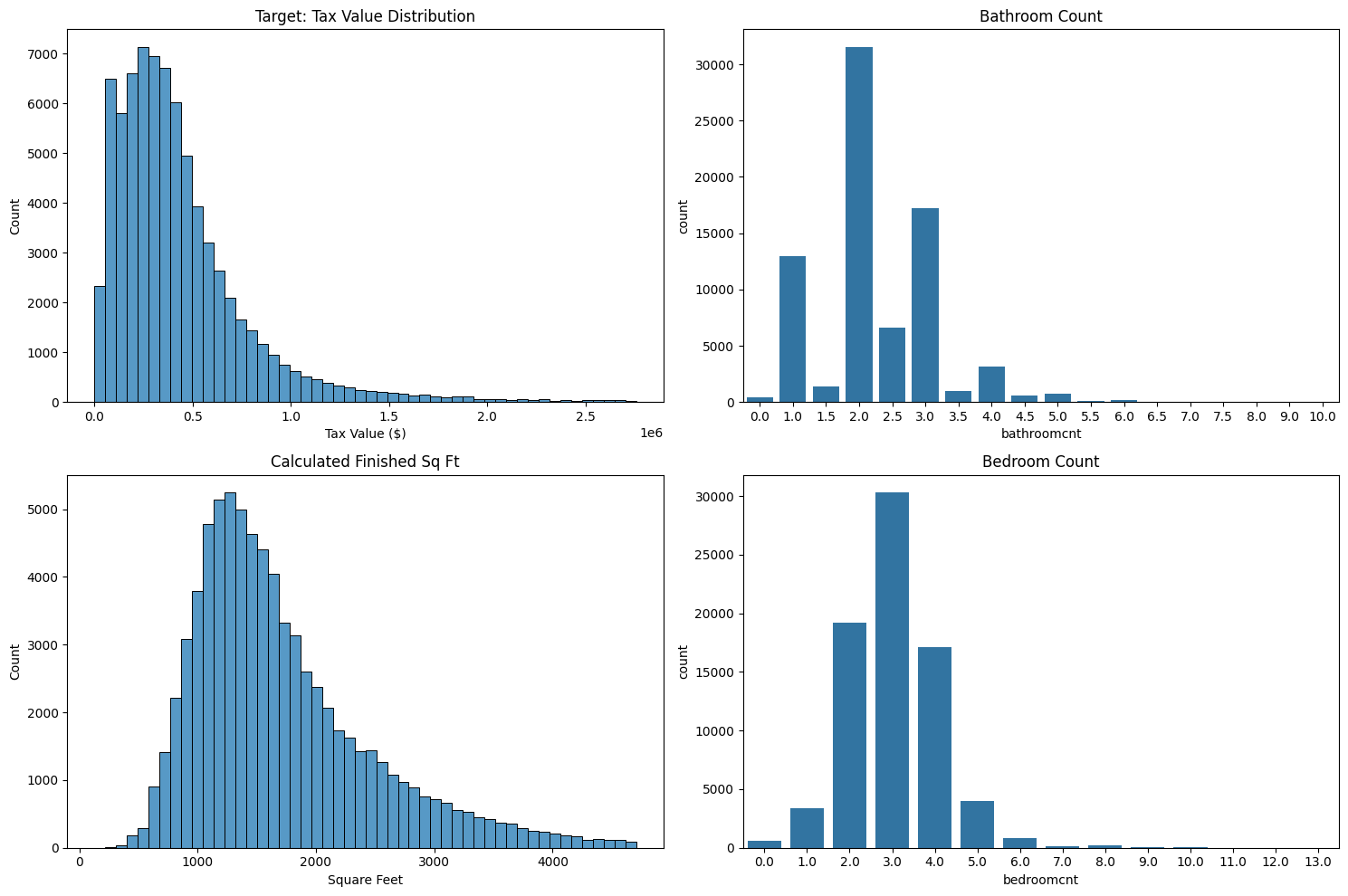

The dataset is a subset of the Zillow Kaggle competition dataset (77,613 properties, 55 features). Preprocessing involved removing columns with more than 90% missing values, median/mode imputation, one-hot encoding, and outlier filtering. The target variable (taxvaluedollarcnt) was highly right-skewed, so MAE was chosen over RMSE as the primary performance metric: RMSE squares each error, which lets a small number of very expensive properties dominate the score.
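The preprocessing steps above can be sketched roughly as follows. This is a minimal illustration and not the project's actual pipeline: the function name `preprocess`, the split of median imputation for numeric columns and mode imputation for the rest, and the 1st to 99th percentile outlier rule are all assumptions, since the write-up does not specify them.

```python
import numpy as np
import pandas as pd


def preprocess(df: pd.DataFrame,
               target: str = "taxvaluedollarcnt",
               max_missing: float = 0.90) -> pd.DataFrame:
    """Hypothetical preprocessing sketch; thresholds are illustrative."""
    # 1. Drop columns whose share of missing values exceeds the threshold.
    keep = df.isna().mean() <= max_missing
    df = df.loc[:, keep].copy()

    # 2. Impute: median for numeric columns, mode for everything else.
    #    The target is skipped (assumption: labels are never imputed).
    cat_cols = []
    for col in df.columns:
        if col == target:
            continue
        if pd.api.types.is_numeric_dtype(df[col]):
            df[col] = df[col].fillna(df[col].median())
        else:
            df[col] = df[col].fillna(df[col].mode().iloc[0])
            cat_cols.append(col)

    # 3. One-hot encode the non-numeric columns.
    if cat_cols:
        df = pd.get_dummies(df, columns=cat_cols)

    # 4. Outlier filtering. Assumed rule: keep rows whose target lies
    #    between the 1st and 99th percentiles.
    low, high = df[target].quantile([0.01, 0.99])
    return df[df[target].between(low, high)]
```

In a real project the imputation statistics would normally be fitted on the training split only and then reused on validation and test data, so that no information leaks across splits.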

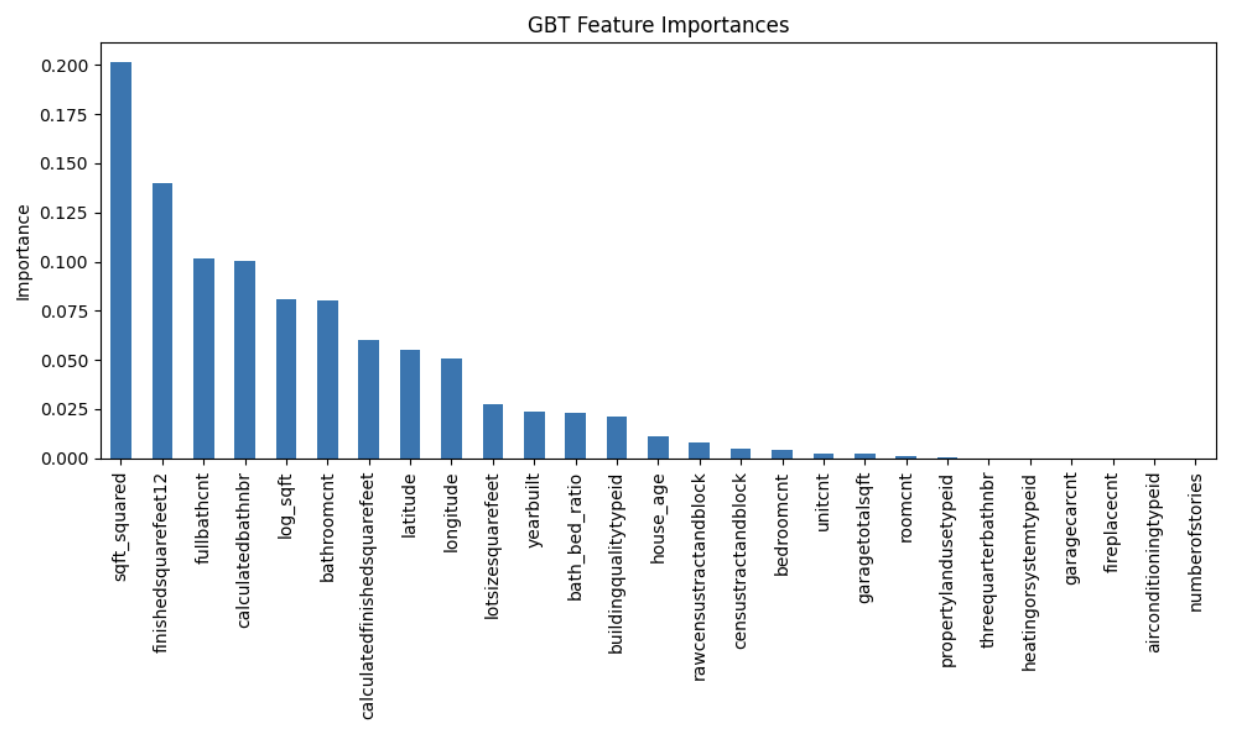

Feature Engineering

Engineered features included log-transformed square footage (log_sqft), squared square footage (sqft_squared), bathroom-to-bedroom ratio, and house age. These features had the largest effect on Lasso Regression, reducing its CV MAE from $242k to $234k. Tree-based models did not benefit, since they already capture nonlinear relationships through their splits.
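A minimal sketch of how these features might be derived is shown below. The names `log_sqft` and `sqft_squared` come from the text above; everything else is an assumption: the input column names (taken from the public Zillow schema), the output names `bath_bed_ratio` and `house_age`, the use of `log1p` rather than a plain log, the reference year of 2016, and the handling of zero-bedroom properties.

```python
import numpy as np
import pandas as pd


def add_engineered_features(df: pd.DataFrame,
                            reference_year: int = 2016) -> pd.DataFrame:
    """Hypothetical feature-engineering sketch."""
    out = df.copy()
    sqft = out["calculatedfinishedsquarefeet"]

    # Log transform compresses the long right tail of square footage.
    out["log_sqft"] = np.log1p(sqft)

    # Squared term lets a linear model fit curvature in the size effect.
    out["sqft_squared"] = sqft ** 2

    # Bathroom-to-bedroom ratio. Assumption: treat zero bedrooms as one
    # to avoid division by zero.
    out["bath_bed_ratio"] = out["bathroomcnt"] / out["bedroomcnt"].clip(lower=1)

    # House age relative to an assumed reference year.
    out["house_age"] = reference_year - out["yearbuilt"]
    return out
```

Because a log transform is monotonic, it does not change the set of splits available to a decision tree, which is consistent with tree-based models gaining little from `log_sqft`.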

Model Results

| Model | CV MAE | Test MAE |
|---|---|---|
| Lasso Regression | $233,626 | N/A |
| Decision Tree | $209,967 | N/A |
| Gradient Boosting | $189,297 | $195,927 |

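Training MAE for the selected model was $157,135, compared with a CV MAE of $189,297 (roughly 20% higher), which indicates mild overfitting. The test MAE of $195,927 is within about 4% of the CV MAE, so the model still generalises well to unseen data.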
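The comparison of training and cross-validation error can be sketched with scikit-learn as below. This is an illustration under stated assumptions, not the project's code: the data is synthetic (not the Zillow dataset), the hyperparameters are placeholders, and 5-fold cross-validation is assumed because the write-up does not state the number of folds.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.linear_model import Lasso
from sklearn.model_selection import cross_validate
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.tree import DecisionTreeRegressor

# Synthetic stand-in data with a skewed, noisy target (assumption).
rng = np.random.default_rng(0)
X = rng.uniform(500, 5000, size=(300, 3))
y = 150 * X[:, 0] + 0.01 * X[:, 1] ** 2 + rng.lognormal(10, 1, size=300)

# Placeholder hyperparameters, not the tuned values from the project.
models = {
    "Lasso Regression": make_pipeline(StandardScaler(), Lasso(alpha=1.0)),
    "Decision Tree": DecisionTreeRegressor(max_depth=5, random_state=0),
    "Gradient Boosting": GradientBoostingRegressor(random_state=0),
}

results = {}
for name, model in models.items():
    scores = cross_validate(
        model, X, y,
        cv=5,
        scoring="neg_mean_absolute_error",  # scikit-learn negates errors
        return_train_score=True,
    )
    results[name] = {
        "train_mae": -scores["train_score"].mean(),
        "cv_mae": -scores["test_score"].mean(),
    }

for name, r in results.items():
    print(f"{name}: train MAE={r['train_mae']:,.0f}, CV MAE={r['cv_mae']:,.0f}")
```

A training MAE well below the CV MAE for the same model is the pattern described above as overfitting; how large a gap is acceptable is a judgment call for the specific application.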

Feature Importance

Target Distribution & Residuals

Limitations & Next Steps

The model struggles with extreme high-value properties because the target variable is highly right-skewed. Future work would include additional hyperparameter tuning, comparison with other ensemble models such as Random Forest, and enriching the dataset with neighborhood-level features (proximity to schools, walkability, comparable sales).